AI-specific Server Market Size 2026-2030

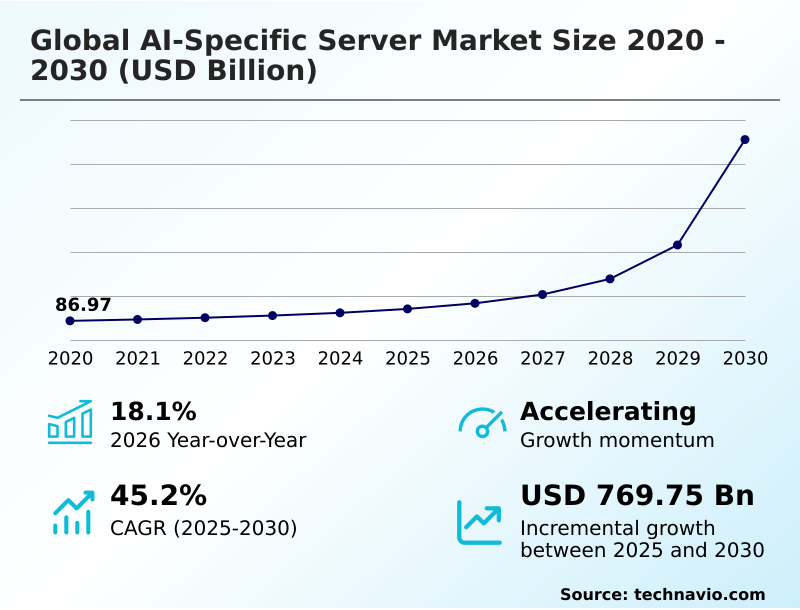

The AI-specific Server Market size was valued at USD 141.26 billion in 2025 and is forecast to grow at a CAGR of 45.2% during the forecast period 2026-2030.

Major Market Trends & Insights

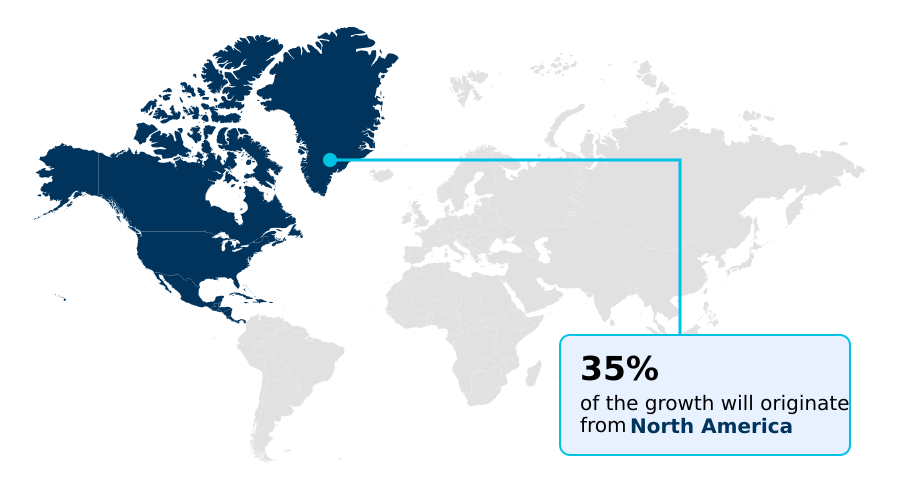

- North America dominated the market and is expected to account for 34.6% of market growth during the forecast period.

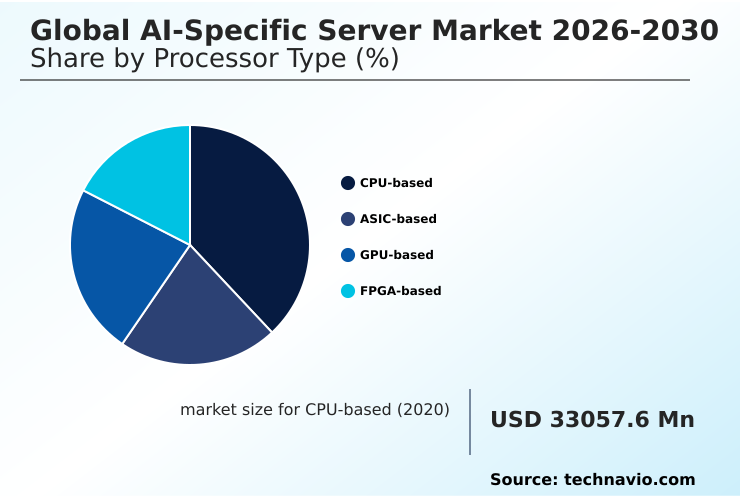

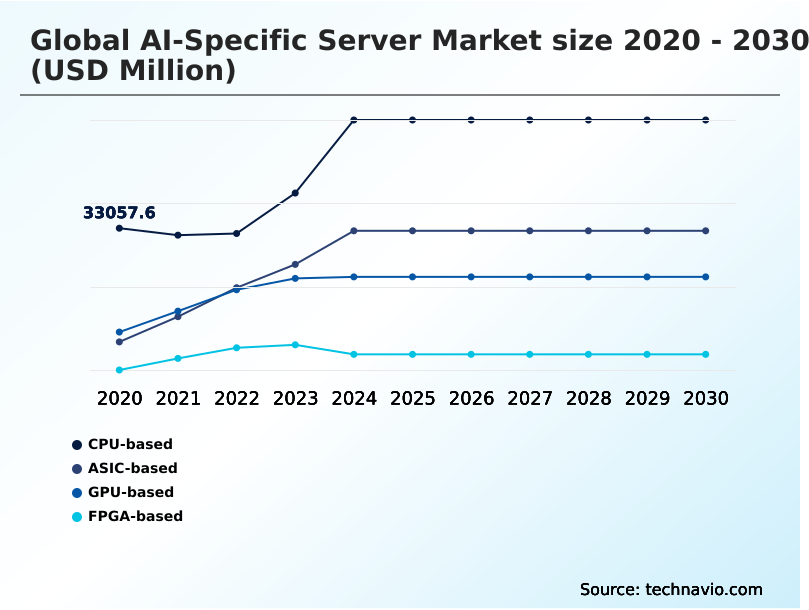

- By Processor Type - The CPU-based segment was valued at USD 46.69 billion in 2024

- By Component - Hardware segment accounted for the largest market revenue share in 2024

Market Size & Forecast

- Historic Market Opportunities 2020-2024: USD 824.05 billion

- Future Market Opportunities 2025-2030: USD 769.75 billion

- CAGR from 2025 to 2030: 45.2%
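The headline figures are linked by the standard compound-growth relationship V_2030 = V_2025 × (1 + CAGR)^5. The sketch below, which assumes the 45.2% CAGR compounds annually on the USD 141.26 billion 2025 base for five years, checks whether the reported incremental opportunity is internally consistent. The function names are illustrative only.

```python
def project_value(base: float, cagr: float, years: int) -> float:
    """Compound a base-year value forward: V_t = V_0 * (1 + r) ** t."""
    return base * (1.0 + cagr) ** years


def incremental_growth(base: float, cagr: float, years: int) -> float:
    """Absolute increase over the period: V_t - V_0."""
    return project_value(base, cagr, years) - base


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


base_2025 = 141.26  # USD billion, 2025 market size reported above
cagr = 0.452        # 45.2% CAGR reported above
years = 5           # annual compounding steps, 2025 -> 2030 (assumption)

value_2030 = project_value(base_2025, cagr, years)
growth = incremental_growth(base_2025, cagr, years)
check = implied_cagr(base_2025, base_2025 + 769.75, years)

print(f"Implied 2030 value: USD {value_2030:.1f} billion")
print(f"Implied 2026-2030 increment: USD {growth:.1f} billion")
print(f"CAGR implied by the reported increment: {check:.2%}")
```

Under these assumptions the projected increment comes to about USD 770 billion, within rounding of the reported USD 769.75 billion, and the CAGR implied by the reported increment rounds to 45.2%.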

Market Summary

- The AI-specific server market is defined by a rapid pivot to high-density, liquid-cooled systems, with leading-edge server racks now handling power loads above 120 kilowatts, an 800% increase over traditional designs. This shift is a direct response to the computational demands of generative AI, whose large models can require thousands of accelerators operating in parallel.

- For instance, a financial services firm implementing these systems for algorithmic trading can reduce model inference latency by up to 60%, enabling faster transaction execution. The primary driver is the industrial-scale deployment of large language models, necessitating advanced interconnects to prevent data bottlenecks.

- However, this growth is constrained by significant challenges, including semiconductor supply chain fragility and the immense strain these deployments place on regional power grids, which can delay new data center projects.

What will be the Size of the AI-specific Server Market during the forecast period?


How is the Ai-specific Server Market Segmented?

The AI-specific server industry research report provides comprehensive data (region-wise segment analysis), with forecasts and analysis for the period 2026-2030, as well as historical data from 2020-2024 for the following segments.

- Processor type
  - CPU-based
  - ASIC-based
  - GPU-based
  - FPGA-based
- Component
  - Hardware
  - Software
  - Services
- Deployment
  - On-premises
  - Cloud
- End-user
  - IT and telecom
  - Automotive
  - BFSI
  - Healthcare
  - Others
- Geography
  - North America
    - US
    - Canada
    - Mexico
  - Europe
    - Germany
    - UK
    - France
  - APAC
    - China
    - Japan
    - India
  - South America
    - Brazil
    - Argentina
  - Middle East and Africa
    - Saudi Arabia
    - UAE
    - South Africa
  - Rest of World (ROW)


How is the AI-specific Server Market Segmented by Processor Type?

The CPU-based segment is estimated to witness significant growth during the forecast period.

The CPU-based server segment is evolving beyond general-purpose computing, with modern designs integrating dedicated acceleration blocks that boost inference performance by over 30%.

While CPUs have traditionally been used for less intensive tasks, new workload-optimized architectures feature instruction sets designed for tensor operations, making them viable for hybrid cloud AI deployments and digital twin simulation.

The versatility of x86 and ARM architectures allows enterprises to leverage existing software ecosystems for their enterprise AI platform, reducing redevelopment costs by up to 25%.

This adaptability is critical for edge computing nodes, where power constraints and the need to combine parallel processing with light AI tasks favor the flexibility of CPUs, including models with integrated tensor-processing capabilities.

The CPU-based segment was valued at USD 46.69 billion in 2024 and is expected to increase gradually during the forecast period.

How is demand for the AI-specific Server Market rising in the leading region?

North America is estimated to contribute 34.6% to the growth of the global market during the forecast period. Technavio's analysts have explained in detail the regional trends and drivers that shape the market during the forecast period.


North America leads the AI-specific server market, contributing approximately 34.6% of the incremental growth, primarily driven by hyperscale data center expansions in the United States, which outpaces all other countries in compute capacity investment.

In contrast, APAC is the fastest-growing region, with a CAGR of 49.3%, as sovereign AI initiatives in China and India fuel demand for localized hardware. This divergence is influenced by differing data residency and AI governance mandates.

For example, European regulations have led to a 20% higher adoption rate of energy-efficient liquid cooling to comply with strict environmental standards.

APAC's focus on custom silicon has spurred a significant rise in the deployment of application-specific integrated circuit (ASIC) and field-programmable gate array (FPGA) solutions, creating a distinct regional supply chain focused on localized machine learning operations and AI infrastructure-as-a-service.

What are the key Drivers, Trends, and Challenges in the Ai-specific Server Market?

Our researchers analyzed the data with 2025 as the base year, along with the key drivers, trends, and challenges. A holistic analysis of drivers will help companies refine their marketing strategies to gain a competitive advantage.

- Enterprises evaluating GPUs versus ASICs for AI workloads face a complex decision matrix in which performance-per-watt is a critical factor, with ASICs often delivering a 3x to 5x efficiency gain for specialized tasks. This trade-off is central to designing modern AI infrastructure, where AI server power consumption is a primary operational challenge.

- The implementation of advanced techniques, such as liquid cooling for high-density servers, has become standard practice, reducing energy costs by up to 30% and enabling the deployment of more powerful accelerators within existing data center footprints. Concurrently, the strategic imperative of data sovereignty is compelling organizations to build sovereign AI clouds.

- This approach ensures compliance with data residency laws but introduces architectural complexity. Furthermore, as intelligence moves from the data center to the source of data generation, effective edge AI server deployment strategies are becoming crucial. These strategies prioritize low-latency processing and ruggedized hardware, ensuring reliable performance in diverse operational environments and balancing centralized training with distributed inference capabilities.
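The GPU-versus-ASIC performance-per-watt comparison reduces to a ratio of throughput to power measured on the same workload. The sketch below uses placeholder throughput and power figures, chosen only for illustration and not drawn from any vendor, to show how a gap in the 3x to 5x range would be computed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    throughput: float   # inferences per second on a fixed workload (hypothetical)
    power_watts: float  # board power under that workload (hypothetical)

    @property
    def perf_per_watt(self) -> float:
        """Work delivered per watt of power drawn."""
        return self.throughput / self.power_watts


def efficiency_ratio(a: Accelerator, b: Accelerator) -> float:
    """How many times more work per watt `a` delivers than `b`."""
    return a.perf_per_watt / b.perf_per_watt


# Illustrative placeholder figures, not vendor data.
gpu = Accelerator("general-purpose GPU", throughput=10_000, power_watts=700)
asic = Accelerator("inference ASIC", throughput=12_000, power_watts=200)

ratio = efficiency_ratio(asic, gpu)
print(f"{asic.name}: {asic.perf_per_watt:.1f} inferences/s per W")
print(f"{gpu.name}: {gpu.perf_per_watt:.1f} inferences/s per W")
print(f"ASIC efficiency advantage: {ratio:.1f}x")
```

In this made-up case the ASIC delivers less than twice the raw throughput but about 4.2x the work per watt, which is why the comparison is usually framed per watt rather than per device.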

What are the key market drivers leading to the rise in the adoption of AI-specific servers?

- The strategic proliferation of generative AI and the industrialization of large-scale training clusters are primary drivers of market growth.

- The rapid proliferation of generative AI workloads is a primary market driver, compelling enterprises to adopt GPU-accelerated computing solutions that can increase training efficiency by over 50%.

- This industrialization of AI has accelerated demand for sovereign AI infrastructure, where data residency and security are paramount, particularly in regulated industries.

- To manage the extreme heat from these systems, the adoption of direct-to-chip cooling has become standard, improving system uptime by reducing thermal throttling incidents by more than 90%.

- The market is evolving toward an end-to-end data platform, supported by AI-driven orchestration that dynamically allocates resources for tasks like training an autonomous AI agent. This trend also encourages custom silicon development, leading to a more composable infrastructure.
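Sizing a direct-to-chip cooling loop follows the basic heat-balance relation Q = ṁ·c_p·ΔT: the coolant mass flow must carry away the rack's heat at a chosen temperature rise. The sketch below assumes plain water as the coolant, a 120 kW rack (the rack power level cited in this report), a 10 K inlet-to-outlet rise, and that the liquid loop captures all of the heat. Real deployments typically split heat between the liquid loop and room air, and the function name is hypothetical.

```python
WATER_CP = 4186.0      # specific heat of water, J/(kg*K), near room temperature
WATER_DENSITY = 997.0  # density of water, kg/m^3, at about 25 C


def coolant_flow_lpm(heat_watts: float, delta_t_kelvin: float,
                     cp: float = WATER_CP, density: float = WATER_DENSITY) -> float:
    """Volumetric flow in litres per minute needed to remove `heat_watts`
    with a coolant temperature rise of `delta_t_kelvin` (Q = m_dot * cp * dT)."""
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    mass_flow = heat_watts / (cp * delta_t_kelvin)  # kg/s
    volume_flow = mass_flow / density               # m^3/s
    return volume_flow * 1000.0 * 60.0             # L/min


# Assumed scenario: 120 kW rack, all heat into the liquid loop, 10 K rise.
flow = coolant_flow_lpm(120_000, 10.0)
print(f"Required coolant flow: {flow:.0f} L/min")
```

Under these assumptions the loop needs roughly 170 L/min. Because flow scales as 1/ΔT, designing for a 20 K rise would halve the required flow, at the cost of warmer coolant reaching downstream components.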

What are the market trends shaping the AI-specific Server Industry?

- The institutionalization of 1.6-terabit Ethernet and silicon photonics integration represents a key market trend. This shift is essential for eliminating bandwidth bottlenecks in large-scale AI training environments.

- The institutionalization of 1.6-terabit Ethernet is fundamentally reshaping high-density rack architecture, driven by the need to eliminate data throughput bottlenecks in large-scale training clusters, and can improve interconnect speed by up to 100% over prior standards.

- This trend involves a structural shift from traditional pluggable modules to co-packaged optics, a key element of silicon photonics integration that reduces power consumption per bit by over 30%. The modern AI factory model increasingly relies on this optical-first approach to create a unified AI fabric essential for scaling generative AI.

- This move toward integrated rack-scale design, often coupled with advanced liquid-cooling technology and high-performance interconnects, facilitates the deployment of massive compute capacity required for next-generation AI workloads.
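The per-bit power savings of co-packaged optics translate into absolute power through P = bit rate × energy per bit. The sketch below assumes a hypothetical 15 pJ/bit for a pluggable 1.6 Tb/s module, applies the roughly 30% per-bit reduction cited above for co-packaged optics, and scales the difference across a hypothetical 10,000-port cluster. None of these inputs are measured values.

```python
def link_power_watts(bit_rate_bps: float, energy_pj_per_bit: float) -> float:
    """Power drawn by an optical link: P = bit rate x energy per bit."""
    return bit_rate_bps * energy_pj_per_bit * 1e-12  # pJ -> J


RATE_1_6T = 1.6e12  # one 1.6 Tb/s port, in bits per second

# Hypothetical energy-per-bit figures for illustration only.
pluggable_pj = 15.0
cpo_pj = pluggable_pj * (1 - 0.30)  # assumed 30% per-bit reduction

ports = 10_000  # hypothetical optical port count in a training cluster

p_pluggable = link_power_watts(RATE_1_6T, pluggable_pj)
p_cpo = link_power_watts(RATE_1_6T, cpo_pj)
savings_kw = (p_pluggable - p_cpo) * ports / 1000.0

print(f"Per port: {p_pluggable:.1f} W pluggable vs {p_cpo:.1f} W co-packaged")
print(f"Cluster-wide saving: {savings_kw:.0f} kW across {ports} ports")
```

With these inputs each port drops from 24 W to 16.8 W, or about 72 kW across the cluster, before counting the cooling power needed to remove that heat.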

What challenges does the AI-specific Server Industry face during its growth?

- The intensification of thermal management complexity and escalating power grid constraints pose a key challenge to industry growth.

- A significant market challenge is the intensification of thermal management complexity: the rising thermal design power (TDP) of accelerators has pushed rack power requirements above 120 kilowatts, a tenfold increase over legacy systems. This trend places severe strain on regional power grids and degrades power usage effectiveness (PUE) metrics.

- A concurrent challenge is the persistent advanced packaging bottleneck, particularly in chip-on-wafer-on-substrate (CoWoS) production, which can delay server shipments by six to nine months. This supply fragility complicates the AI model lifecycle, as hardware availability dictates development timelines.

- While a modular data center and disaggregated server design offer some flexibility, these core power and semiconductor supply issues remain significant barriers to scalable deployment.
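Power usage effectiveness is the ratio of total facility power to IT equipment power, so every kilowatt of cooling overhead raises both PUE and the load presented to the grid. The sketch below compares a hypothetical hall of ten 120 kW racks under two assumed overhead profiles; the cooling and ancillary figures are illustrative placeholders, not benchmarks.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float = 0.0) -> float:
    """Power usage effectiveness = total facility power / IT equipment power.
    A value of 1.0 would mean zero non-IT overhead."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


def grid_demand_kw(it_kw: float, pue_value: float) -> float:
    """Total power the facility draws from the grid for a given IT load."""
    return it_kw * pue_value


# Hypothetical hall: ten 120 kW racks, i.e. 1,200 kW of IT load.
it_load = 10 * 120.0
air_cooled = pue(it_load, cooling_kw=540.0, other_overhead_kw=60.0)     # assumed
liquid_cooled = pue(it_load, cooling_kw=180.0, other_overhead_kw=60.0)  # assumed

for label, value in (("air-cooled", air_cooled), ("liquid-cooled", liquid_cooled)):
    print(f"{label}: PUE {value:.2f}, grid demand {grid_demand_kw(it_load, value):.0f} kW")
```

With these placeholder overheads the air-cooled case gives a PUE of 1.50 and draws 1,800 kW from the grid, while the liquid-cooled case gives 1.20 and 1,440 kW for the same compute.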

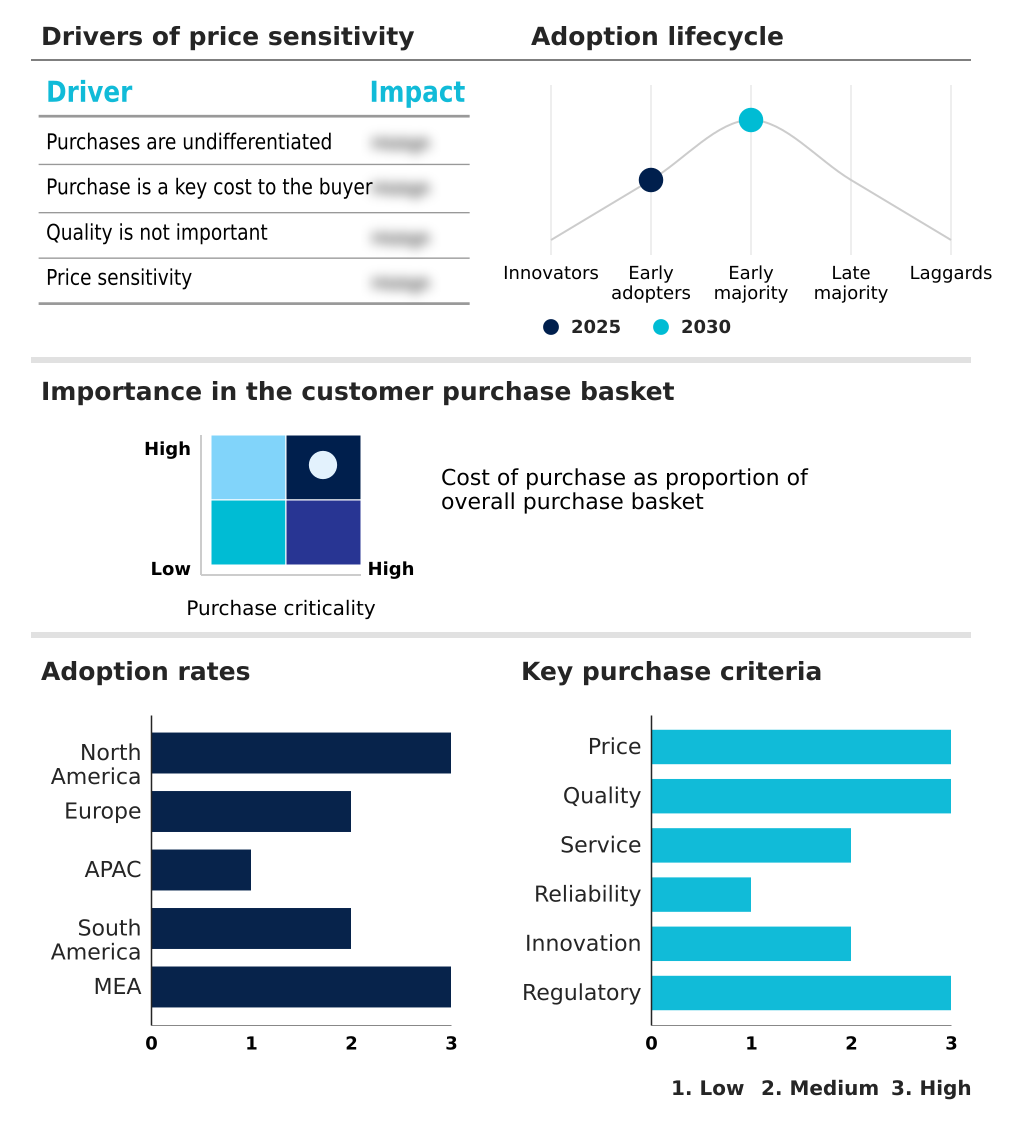

Exclusive Technavio Analysis on Customer Landscape

The AI-specific server market forecasting report covers the market's adoption lifecycle, from the innovator stage to the laggard stage. It focuses on adoption rates in different regions based on penetration. Furthermore, the report includes key purchase criteria and drivers of price sensitivity to help companies evaluate and develop their market growth strategies.

Customer Landscape of AI-specific Server Industry

Competitive Landscape

Companies are implementing various strategies, such as strategic alliances, partnerships, mergers and acquisitions, geographical expansion, and product/service launches, to enhance their presence in the industry.

Advanced Micro Devices Inc. - The company offers high-performance AI-specific server platforms featuring GPU acceleration, liquid cooling, and scalable architectures designed for end-to-end AI development and demanding workloads.

The industry research and growth report includes detailed analyses of the competitive landscape of the market and information about key companies, including:

- Advanced Micro Devices Inc.

- ASUSTeK Computer Inc.

- Cerebras Systems Inc.

- Cisco Systems Inc.

- Dell Technologies Inc.

- Gigabyte Technology Co. Ltd.

- Hewlett Packard Enterprise Co.

- IBM Corp.

- Infineon Technologies AG

- Intel Corp.

- Inventec Corp.

- Lenovo Group Ltd.

- New H3C Technologies

- NVIDIA Corp.

- Penguin Solutions Inc.

- Quanta Computer Inc.

- Samsung Electronics Co. Ltd.

- Super Micro Computer Inc.

- Wiwynn Corp.

Qualitative and quantitative analysis of companies has been conducted to help clients understand the wider business environment as well as the strengths and weaknesses of key industry players. Data is qualitatively analyzed to categorize companies as pure play, category-focused, industry-focused, and diversified; it is quantitatively analyzed to categorize companies as dominant, leading, strong, tentative, and weak.

Market Intelligence Radar: High-Impact Developments & Growth Signals

- In the Application Software industry, the increasing adoption of cloud-native and subscription-based software models is influencing the AI-specific server market by driving demand for elastic and scalable AI infrastructure-as-a-service platforms that can support on-demand computational workloads.

- Growing data security and privacy concerns within the Application Software industry, particularly around cloud services, are accelerating the adoption of sovereign AI infrastructure and on-premises AI-specific servers that offer enhanced control and compliance with data residency protocols.

- The proliferation of data and analytics tools within enterprise application software is creating a downstream requirement for powerful AI-specific servers, as businesses need robust hardware for machine learning operations and to process massive datasets for business intelligence and predictive modeling.

- The rising automation of business processes through Application Software, such as RPA and BPM, is fueling demand for AI-specific servers capable of handling real-time inference workloads and supporting intelligent automation at scale within enterprise environments.

Technavio's research methodology blends expert interviews, extensive data synthesis, and validated models to produce its AI-specific Server Market insights.

| Market Scope | Details |
|---|---|
| Page number | 327 |
| Base year | 2025 |
| Historic period | 2020-2024 |
| Forecast period | 2026-2030 |
| Growth momentum & CAGR | Accelerate at a CAGR of 45.2% |
| Market growth 2026-2030 | USD 769,749.9 million |
| Market structure | Fragmented |
| YoY growth 2025-2026 (%) | 18.1 |
| Key countries | US, Canada, Mexico, Germany, UK, France, Italy, The Netherlands, Spain, China, Japan, India, South Korea, Australia, Indonesia, Brazil, Argentina, Chile, Saudi Arabia, UAE, South Africa, Israel and Turkey |
| Competitive landscape | Leading Companies, Market Positioning of Companies, Competitive Strategies, and Industry Risks |

Research Analyst Overview

- The AI-specific server ecosystem is a highly interdependent value chain, with semiconductor designers providing over 85% of the core AI accelerators that define system performance. These components flow to original design manufacturers (ODMs) and original equipment manufacturers (OEMs), who assemble them into rack-scale systems.

- These integrated systems are then procured by the primary end-users—hyperscale cloud providers and large enterprises—who now demand complete, pre-validated AI factory solutions rather than individual components. This shift has increased the importance of MLOps platforms and AI-driven orchestration software, which can improve resource utilization by up to 40%.

- The entire ecosystem is shaped by the availability of critical technologies like high-bandwidth memory and advanced packaging, with supply bottlenecks directly impacting production timelines and market pricing.
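Orchestration software raises utilization largely by packing workloads onto fewer, fuller nodes. The sketch below illustrates that effect with a classic first-fit-decreasing bin-packing heuristic, not an AI-driven scheduler, using hypothetical GPU requests on assumed 8-GPU nodes and a deliberately simple one-job-per-node baseline.

```python
from typing import List


def first_fit_decreasing(job_gpus: List[int], gpus_per_node: int) -> List[List[int]]:
    """Place jobs (each needing some GPUs) on as few nodes as possible using
    first-fit decreasing. Returns the GPU requests placed on each node."""
    nodes: List[List[int]] = []
    free: List[int] = []
    for need in sorted(job_gpus, reverse=True):
        if need > gpus_per_node:
            raise ValueError(f"job needing {need} GPUs exceeds node size")
        for i, slots in enumerate(free):
            if need <= slots:  # first node with enough free GPUs
                nodes[i].append(need)
                free[i] -= need
                break
        else:  # no existing node fits: open a new one
            nodes.append([need])
            free.append(gpus_per_node - need)
    return nodes


def utilization(nodes: List[List[int]], gpus_per_node: int) -> float:
    """Fraction of GPUs on the allocated nodes that are actually busy."""
    used = sum(sum(node) for node in nodes)
    return used / (len(nodes) * gpus_per_node)


jobs = [4, 2, 6, 1, 3, 2, 4, 2]  # hypothetical GPU requests
GPUS_PER_NODE = 8                # assumed node size

naive = [[job] for job in jobs]  # baseline: one job per node, no sharing
packed = first_fit_decreasing(jobs, GPUS_PER_NODE)
print(f"naive: {len(naive)} nodes, {utilization(naive, GPUS_PER_NODE):.1%} busy")
print(f"packed: {len(packed)} nodes, {utilization(packed, GPUS_PER_NODE):.1%} busy")
```

Here the baseline occupies eight nodes at 37.5% utilization, while first-fit decreasing fits the same 24 GPUs of demand onto three fully used nodes. Production schedulers also weigh interconnect topology, job priorities, and preemption, which this sketch ignores.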

What Key Data Are Covered in this AI-specific Server Market Research and Growth Report?


What is the expected growth of the AI-specific Server Market between 2026 and 2030?


The AI-specific Server Market is expected to grow by USD 769.75 billion during 2026-2030, registering a CAGR of 45.2%. Year-over-year growth in 2026 is estimated at 18.1%. This acceleration is driven by the strategic proliferation of generative AI and large-scale training clusters, which is intensifying demand across the end-use verticals covered in the report.


What segmentation does the market report cover?


The report is segmented by Processor Type (CPU-based, ASIC-based, GPU-based, and FPGA-based), Component (Hardware, Software, and Services), Deployment (On-premises and Cloud), End-user (IT and telecom, Automotive, BFSI, Healthcare, and Others), and Geography (North America, Europe, APAC, South America, and Middle East and Africa). Among these, the CPU-based segment is estimated to witness significant growth during the forecast period, driven by rising adoption across key application areas. Each segment includes detailed qualitative and quantitative analysis, along with historical data for 2020-2024 and forecasts through 2030 with year-over-year growth rates.


Which regions are analyzed in the report?


The report covers North America, Europe, APAC, South America and Middle East and Africa. North America is estimated to contribute 34.6% to market growth during the forecast period. Country-level analysis includes US, Canada, Mexico, Germany, UK, France, Italy, The Netherlands, Spain, China, Japan, India, South Korea, Australia, Indonesia, Brazil, Argentina, Chile, Saudi Arabia, UAE, South Africa, Israel and Turkey, with dedicated market size tables and year-over-year growth for each.


What are the key growth drivers and market challenges?


The primary driver is the strategic proliferation of generative AI and large-scale training clusters, which is accelerating investment and industry demand. The main challenge is the intensification of thermal management complexity and power grid constraints, which creates operational barriers for key market participants. The report quantifies the impact of each driver and challenge in 2026 and 2030 with comparative analysis.


Who are the major players in the AI-specific Server Market?


Key vendors include Advanced Micro Devices Inc., ASUSTeK Computer Inc., Cerebras Systems Inc., Cisco Systems Inc., Dell Technologies Inc., Gigabyte Technology Co. Ltd., Hewlett Packard Enterprise Co., IBM Corp., Infineon Technologies AG, Intel Corp., Inventec Corp., Lenovo Group Ltd., New H3C Technologies, NVIDIA Corp., Penguin Solutions Inc., Quanta Computer Inc., Samsung Electronics Co. Ltd., Super Micro Computer Inc. and Wiwynn Corp. The report provides qualitative and quantitative analysis categorizing companies as dominant, leading, strong, tentative, and weak based on their market positioning. Company profiles include business segment analysis, SWOT assessment, key offerings, and recent strategic developments.


Market Research Insights

- The competitive landscape for AI-specific servers is undergoing an accelerated consolidation around rack-scale solutions, with the top three vendors now commanding over 60% of the enterprise AI infrastructure segment. This market is shaped by a hyper-competitive innovation cycle where integrated systems are replacing standalone server sales.

- Key vendors are launching turnkey AI Factory platforms that combine specialized silicon, high-speed networking, and modular data center infrastructure to reduce deployment times by half. These developments directly address enterprise demand for faster pilot-to-production timelines for generative AI. However, this progress is tempered by persistent supply chain bottlenecks for critical components like high-bandwidth memory.

- In response, companies are diversifying their sourcing strategies and investing in long-term supply agreements to ensure production continuity and meet the surge in demand for sovereign AI-ready infrastructure.

